The latter is harder to answer and perhaps unethical, as most people do not want to broadcast how secure their database is anyway. The first part is more interesting. We cannot say a specific database is 18% secure or 19% or 60%, as the answer will always be qualified by the database itself, how it's managed, how it can be accessed and lots of other related factors, such as the rest of the company's security, network security, the applications that access the database and much more.

For example, suppose we have an account ORABLOG and its password is very secure, 30 characters long even; profiles are enabled demanding that the password be changed every thirty days, verify functions enforce the rules of the strong password and much more. Suppose we also employ a number of other tools, perhaps a logon trigger that ensures that ORABLOG can only be used from the particular IP address where the application is deployed, or maybe we also use IP chains and valid node checking and much more. We can say that the account is secure, BUT this does not prevent SQL Injection attempts from the application to the database, or someone taking over the application server and "pretending" to be ORABLOG (and the application). Is the database secure? Is the issue the application? Is it server security? Is it application security? This is hard to assess in a particular case with particular circumstances, let alone generally as a principle. Should we detach the "nn% secure" database from the surrounding elements (applications, network, people, admin users...)? We can never say for sure that a database is 60% secure or 17% secure or whatever, because we need context and further details.

BUT, if we have a security standard for our company for Oracle databases, then we could state whether a particular Oracle database is nn% secure against that policy. This is then defined and measurable. If there are, for instance, 30 checks, some high severity and some lower, then we can also give more weight to the checks that are higher severity and less weight to the lower ones. This can then scale the percentage. The true measure is the number of checks that are failed against those that are not.
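
To make the weighting idea concrete, here is a minimal sketch in Python. It is not how PFCLScan calculates its score; the check names, the severity labels and the weights (3, 2, 1) are all made up for illustration. It simply shows how a plain pass/fail percentage and a severity-weighted percentage can differ for the same set of results.

```python
from dataclasses import dataclass

# Hypothetical severity weights; the values are assumptions chosen
# for illustration, not taken from any product or standard.
SEVERITY_WEIGHTS = {"high": 3, "medium": 2, "low": 1}


@dataclass
class CheckResult:
    name: str       # illustrative check name
    severity: str   # one of the keys in SEVERITY_WEIGHTS
    passed: bool    # True if the database satisfied the check


def simple_score(results):
    """Unweighted percentage of checks that passed."""
    if not results:
        return 0.0
    return 100.0 * sum(r.passed for r in results) / len(results)


def weighted_score(results):
    """Percentage of the total severity weight carried by passed checks."""
    total = sum(SEVERITY_WEIGHTS[r.severity] for r in results)
    if total == 0:
        return 0.0
    passed = sum(SEVERITY_WEIGHTS[r.severity] for r in results if r.passed)
    return 100.0 * passed / total


# Made-up results for a four-check policy.
results = [
    CheckResult("default passwords changed", "high", False),
    CheckResult("password verify function set", "high", True),
    CheckResult("audit trail enabled", "medium", True),
    CheckResult("sample schemas removed", "low", True),
]

print(f"unweighted: {simple_score(results):.1f}%")   # 75.0%
print(f"weighted:   {weighted_score(results):.1f}%") # 66.7%
```

With these made-up numbers one failed high severity check pulls the weighted score below the unweighted one, which is the scaling effect described above.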

We have added this feature to PFCLScan in the latest version. We had an average score previously, but that score was a range from 1 to 4, so much less scientific. That previous score was also embedded in the report tool, i.e. the calculation was written into the tool. In the latest version, 1.7, we have added the ability to do the calculation in the report template itself. The old way simply had one variable that you placed in your template and that gave a score; now you do the calculation yourself using PFCLScan report variables. This is much better, as you can now change the calculation if you wish by changing the variables used, changing the weights applied or whatever you wish.

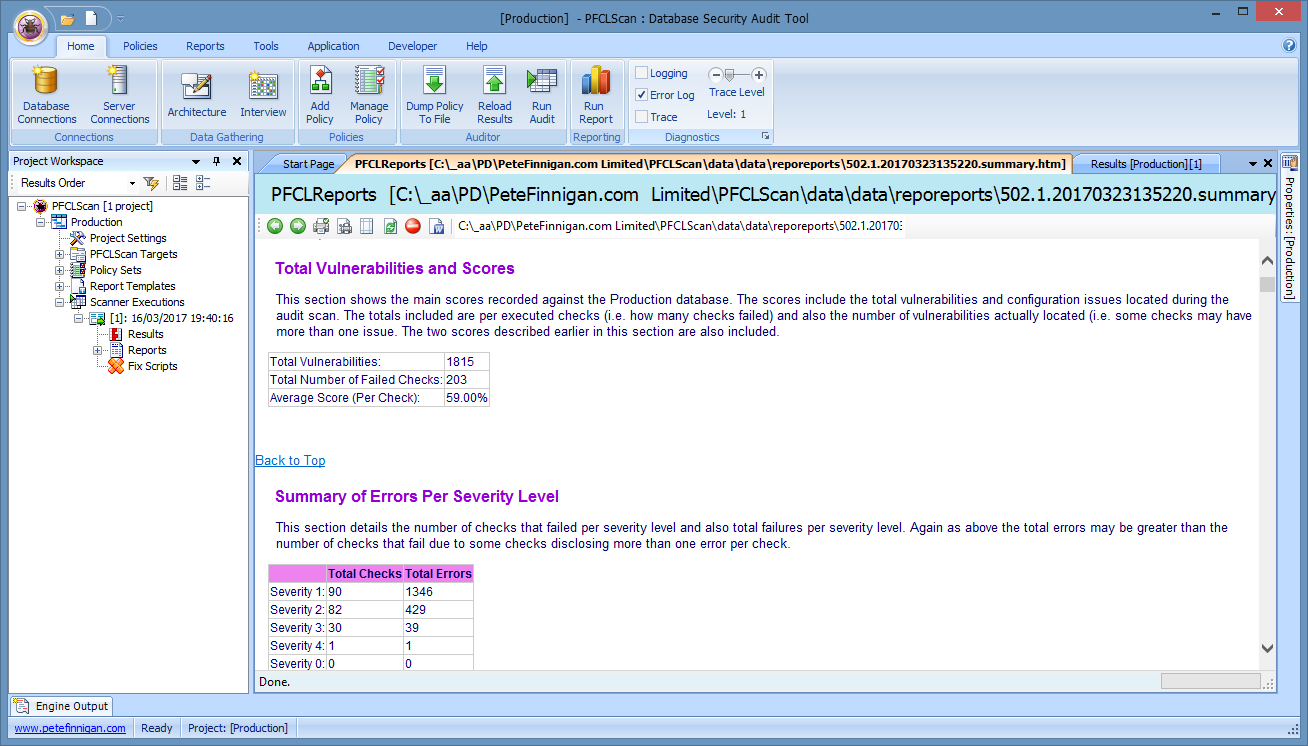

The score is shown here in a report I ran after scanning an 11.2.0.4 Oracle Database.

The average score (per check) is 59%. This means that 59% of the checks are secure in this database. If I fix something in this database, run the scan again and generate a new report, then that percentage would increase. If I undid some of the security work, then the percentage would go down. This percentage is a relative score against a standard; in this case, the checks that are run against the database. You can easily change this score to match your own security policy by calculating it only against checks that match your security standard. You can then re-scan the database regularly and easily graph the changes in score against the standard.
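
As a rough sketch of scoring only against your own policy and tracking the result across re-scans, the Python below filters each scan down to the checks in a policy and prints a simple text graph. This is not PFCLScan report template syntax; the check names, the scan labels and the pass/fail values are hypothetical, and how you export results from your scanner is left as an assumption.

```python
def policy_score(scan, policy_checks):
    """Percentage of policy checks that passed in one scan.

    scan maps a check name to True (passed) or False (failed);
    checks that are not part of the policy are ignored.
    """
    relevant = [passed for name, passed in scan.items() if name in policy_checks]
    if not relevant:
        return 0.0
    return 100.0 * sum(relevant) / len(relevant)


# Hypothetical company policy: only these checks count towards the score.
policy = {"password_profile", "audit_enabled", "no_default_passwords"}

# Made-up results from three successive scans of the same database.
scans = [
    ("scan 1", {"password_profile": False, "audit_enabled": True,
                "no_default_passwords": False, "sample_schemas_removed": True}),
    ("scan 2", {"password_profile": True, "audit_enabled": True,
                "no_default_passwords": False, "sample_schemas_removed": True}),
    ("scan 3", {"password_profile": True, "audit_enabled": True,
                "no_default_passwords": True, "sample_schemas_removed": False}),
]

history = [(label, policy_score(scan, policy)) for label, scan in scans]

# One bar character per 5 percentage points gives a crude trend graph.
for label, score in history:
    print(f"{label}  {score:5.1f}%  {'#' * round(score / 5)}")
```

Note that the `sample_schemas_removed` check fails in the third scan but does not lower the score, because it is not part of the policy being measured.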

Remember, this is a score based only on what is checked, but it is still valuable: it allows us to check the compliance of each database and compare them, and it also allows a single database to be scored over time as security work is done.

Want to know more? Then ask us about PFCLScan.